Last Updated on May 27, 2024 by Arnav Sharma

Azure OpenAI Service provides access to some of OpenAI’s most advanced language models, including the GPT-4, GPT-35-Turbo, and Embeddings model series. Now that GPT-4 and GPT-35-Turbo are generally available, users can apply these models to a wide range of tasks, including content generation, summarization, semantic search, and translating natural language into code. The service can be used through REST APIs, a Python SDK, or a web-based interface called Azure OpenAI Studio. Developers can integrate the models into their applications, while less technical users can experiment in the Studio without writing code.
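To make the REST option concrete, here is a minimal sketch, using only the Python standard library, of how a Chat Completions request to an Azure OpenAI deployment could be built. It assumes the `https://{resource}.openai.azure.com/openai/deployments/{deployment}/chat/completions` route with an `api-key` header. The resource name, deployment name, API version `2024-02-01`, and key are placeholders, not values from this article. The request is built but not sent.

```python
import json
import urllib.request

# Hypothetical placeholders: substitute your own resource name, deployment name,
# API version, and key. None of these values come from the article.
RESOURCE_NAME = "my-resource"
DEPLOYMENT_NAME = "my-gpt-35-turbo"
API_VERSION = "2024-02-01"
API_KEY = "<your-api-key>"


def build_chat_request(resource: str, deployment: str, api_version: str,
                       api_key: str, messages: list) -> urllib.request.Request:
    """Build (but do not send) a Chat Completions request for an Azure OpenAI deployment."""
    # Azure routes requests by the deployment name you chose, not by the model name.
    url = (f"https://{resource}.openai.azure.com/openai/deployments/"
           f"{deployment}/chat/completions?api-version={api_version}")
    body = json.dumps({"messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Content-Type": "application/json", "api-key": api_key},
        method="POST",
    )


req = build_chat_request(
    RESOURCE_NAME, DEPLOYMENT_NAME, API_VERSION, API_KEY,
    [{"role": "user", "content": "Summarize Azure OpenAI in one sentence."}],
)
print(req.full_url)
# Actually sending it would require a real resource and key:
# urllib.request.urlopen(req)
```

In practice the Python SDK wraps this request for you; the sketch only shows which pieces (resource endpoint, deployment, API version, key) a call needs.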

Azure OpenAI Service is not only about model capabilities; it also emphasizes usability and safety. The platform offers a range of models, each suited to particular tasks and price points. For instance, the DALL-E models, currently in preview, generate images from text prompts, while the Whisper models transcribe and translate speech to text. Microsoft also places a strong emphasis on responsible AI. Recognizing the potential risks of generative models, it has put safeguards in place against abuse and unintended harm: an application process that limits who can access the service, content filters that screen out harmful prompts and outputs, and guidance for implementing AI responsibly in customer applications.

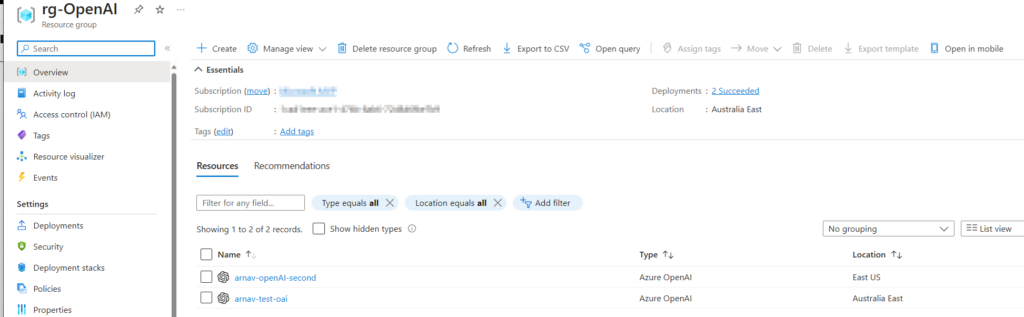

Azure OpenAI Studio:

Azure OpenAI Studio’s Chat Playground

Azure OpenAI Studio includes a Chat playground, a hands-on, no-code environment for exploring what OpenAI models can do. It lets users iterate and experiment quickly with models such as GPT-35-Turbo and GPT-4. The playground’s Assistant setup dropdown offers pre-loaded System message examples. The system message guides the model’s behavior by setting the context and instructions that its responses should follow.
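The role of the system message is easiest to see in the message-list format that the Chat Completions API accepts. The sketch below is illustrative only: `make_conversation` and `add_turn` are hypothetical helpers, and the system message text is just an example. As a design choice of this sketch (not a service requirement), the system message may appear only at the start of the history.

```python
from typing import Literal

Role = Literal["system", "user", "assistant"]


def make_conversation(system_message: str) -> list:
    """Start a chat history with the system message that primes the model."""
    return [{"role": "system", "content": system_message}]


def add_turn(history: list, role: Role, content: str) -> list:
    """Return a new history with one more user or assistant message appended."""
    if role == "system":
        # Convention of this sketch: keep the system message at the top only.
        raise ValueError("the system message belongs at the start of the conversation")
    return history + [{"role": role, "content": content}]


history = make_conversation("You are an AI assistant that helps people find information.")
history = add_turn(history, "user", "What can Azure OpenAI Studio do?")
print([m["role"] for m in history])
```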

GPT-35-Turbo also uses a distinctive prompt structure. Unlike earlier completion models, which take a single block of free-form text, GPT-35-Turbo uses special tokens to mark the different parts of a prompt. Each prompt begins with a system message that primes the model with context or specific instructions. A series of messages exchanged between the user and the assistant follows. The model then generates the assistant’s next reply, and the end of that reply is marked with a special token, which makes it easy to identify and extract. Separating instructions from conversation in this way helps the model distinguish between them and keep its responses relevant to the exchange.
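The special tokens belong to the ChatML format, which Azure’s documentation described for sending GPT-35-Turbo prompts through the Completions API; with the Chat Completions API, the service builds this structure for you from a message list. Here is a minimal sketch, assuming that format, of rendering messages into a ChatML prompt and extracting the reply. The helper names `to_chatml` and `extract_reply` are hypothetical.

```python
def to_chatml(messages: list) -> str:
    """Render chat messages in ChatML, leaving an open assistant turn for the model."""
    parts = [f"<|im_start|>{m['role']}\n{m['content']}\n<|im_end|>" for m in messages]
    # The trailing open tag cues the model to write the assistant's reply.
    parts.append("<|im_start|>assistant\n")
    return "\n".join(parts)


def extract_reply(completion_text: str) -> str:
    """Return the assistant text up to the first <|im_end|> token, if one is present."""
    return completion_text.split("<|im_end|>", 1)[0].strip()


prompt = to_chatml([
    {"role": "system", "content": "Be concise."},
    {"role": "user", "content": "Hi"},
])
print(prompt)
print(extract_reply("Hello! How can I help?\n<|im_end|>"))
```

When calling the Completions API this way, `<|im_end|>` would typically be passed as a stop sequence so that generation ends after the assistant’s turn.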

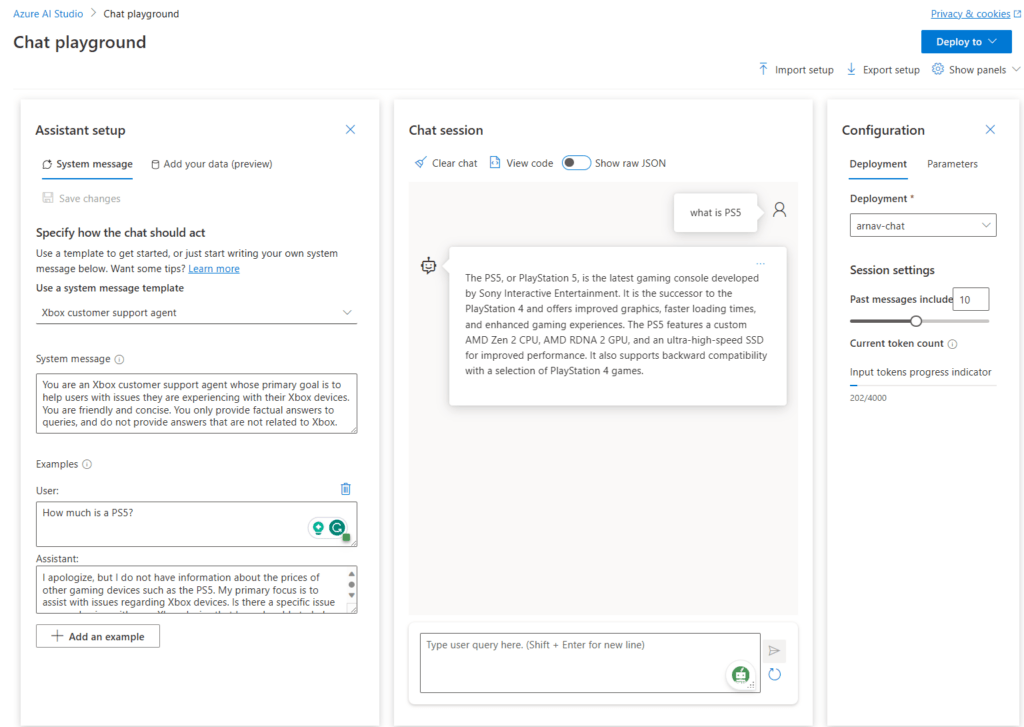

Completions Playground in Azure OpenAI Studio

The Completions playground in Azure OpenAI Studio is an interactive, no-code environment for working directly with Azure OpenAI models, accessible to beginners and experts alike. At its core, it is a text box where users submit prompts and receive generated completions. This makes it easy to iterate, experiment, and get a feel for what the available models can do.

Users can start from one of several pre-loaded examples or write custom prompts for their own tasks. If the resource has no model deployment yet, the playground offers a “Create a deployment” option that opens a step-by-step wizard, so even users unfamiliar with model deployment can get set up quickly.

Beyond basic text generation, the Completions playground exposes several configuration settings. Users can adjust parameters such as temperature, which controls how random the generated output is, and pre-response text, which is inserted after the prompt and before the model’s response. These settings let users tailor the model’s behavior to their specific needs.
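Temperature is usually explained as rescaling the model’s next-token scores z before they are turned into probabilities: p_i = exp(z_i / T) / Σ_j exp(z_j / T). The sketch below applies that standard formulation to made-up scores. It illustrates the idea and does not reproduce Azure OpenAI’s internal implementation. Because it divides by T, the sketch rejects T = 0, whereas the API itself accepts a temperature of 0 for near-deterministic output.

```python
import math


def softmax_with_temperature(logits: list, temperature: float) -> list:
    """Turn raw token scores into probabilities; lower temperature sharpens the distribution."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exponentiating for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
for t in (0.2, 1.0, 2.0):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

At low temperature almost all probability mass lands on the top-scoring token; at higher temperature it spreads out, so less likely tokens are sampled more often.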


Azure OpenAI Studio’s DALL·E Playground

Azure OpenAI Studio also includes the DALL·E playground (Preview), a no-code environment for exploring the image generation capabilities of the DALL·E model. The process is straightforward: users enter a text prompt describing the desired image into a text box and select “Generate.” Once the model has processed the prompt, the AI-created image is displayed on the page.

Safety is built in here as well. Azure OpenAI integrates a content moderation filter into the image generation APIs, so if a prompt is identified as potentially harmful, no image is generated. For those who want to integrate image generation into their own applications, the playground also provides pre-filled code samples, for example in Python and as cURL commands. These samples make it easier to move from experimenting in the playground to building real applications.
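The playground’s pre-filled samples show the exact request for your deployment. As a rough sketch of what such a request involves, the code below builds (without sending) an image generation request and reads the image URL from a response. It assumes the deployment-based `images/generations` route used by DALL-E 3 deployments and API version `2024-02-01`; the earlier DALL·E preview described above used a different endpoint. The resource and deployment names are placeholders, the sample response is invented for illustration, and treating any `error` object as a rejected request (for example, a prompt blocked by the content filter) is a simplification of this sketch.

```python
import json
import urllib.request
from typing import Optional

# Hypothetical placeholders: not taken from the article.
RESOURCE_NAME = "my-resource"
DEPLOYMENT_NAME = "my-dalle-3"
API_VERSION = "2024-02-01"


def build_image_request(prompt: str, api_key: str, size: str = "1024x1024") -> urllib.request.Request:
    """Build (but do not send) an image generation request for an assumed DALL-E 3 deployment."""
    url = (f"https://{RESOURCE_NAME}.openai.azure.com/openai/deployments/"
           f"{DEPLOYMENT_NAME}/images/generations?api-version={API_VERSION}")
    body = {"prompt": prompt, "n": 1, "size": size}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "api-key": api_key},
        method="POST",
    )


def first_image_url(response_json: dict) -> Optional[str]:
    """Return the first generated image URL, or None if the response reports an
    error (such as a filtered prompt) or contains no images."""
    if "error" in response_json:
        return None
    data = response_json.get("data") or []
    return data[0].get("url") if data else None


req = build_image_request("A watercolor lighthouse at dawn", "<your-api-key>")
print(req.full_url)
# Sample response shape assumed for illustration, not real service output:
print(first_image_url({"data": [{"url": "https://example.com/image.png"}]}))
```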

In action:

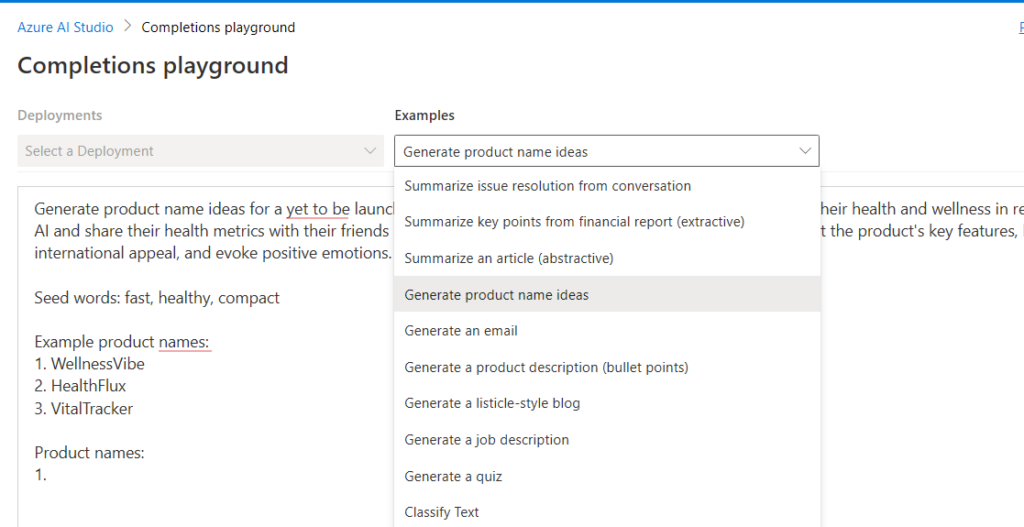

Access to Azure OpenAI:

https://aka.ms/oai/access

Additional Resources and Next Steps:

https://learn.microsoft.com/en-us/azure/ai-services/openai/

FAQ – Using Azure OpenAI Service

Q: What is Azure OpenAI Service?

A: Azure OpenAI Service is a Microsoft Azure service that provides access to OpenAI’s language models, such as GPT-4, GPT-35-Turbo, and the Embeddings models, through REST APIs, SDKs, and the web-based Azure OpenAI Studio.

Q: How can I get started with Azure OpenAI Service?

A: Access is limited, so first submit the access request form (https://aka.ms/oai/access). Once your subscription has been approved, create an Azure OpenAI resource in the Azure portal, deploy a model, and then use it from Azure OpenAI Studio or your own code.

Q: What are some use cases for Azure OpenAI Service?

A: Common use cases include content generation, summarization, semantic search using embeddings, and translating natural language into code, along with other natural language processing tasks.
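Semantic search with embeddings typically works by converting both documents and the query into vectors, then ranking documents by their cosine similarity to the query. In the sketch below, the three-dimensional vectors are made-up stand-ins; real vectors would come from an Embeddings model deployment and have many more dimensions. The document names are hypothetical.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two vectors: 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_documents(query_vec: list, doc_vecs: dict) -> list:
    """Rank documents by similarity to the query, most similar first."""
    scores = [(name, cosine_similarity(query_vec, vec)) for name, vec in doc_vecs.items()]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Toy vectors standing in for real embeddings.
docs = {
    "billing-faq": [0.9, 0.1, 0.0],
    "image-generation-guide": [0.1, 0.8, 0.3],
    "quota-limits": [0.7, 0.2, 0.1],
}
query = [0.85, 0.15, 0.05]
for name, score in rank_documents(query, docs):
    print(f"{name}: {score:.3f}")
```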

Q: Can I use Azure OpenAI Service with my Azure subscription?

A: Yes. Azure OpenAI Service is an Azure service: you create Azure OpenAI resources within your Azure subscription, and usage is billed to that subscription.

Q: How can I deploy a model using Azure OpenAI Service?

A: Models are deployed to an Azure OpenAI resource. You can create and manage deployments in Azure OpenAI Studio (reachable from the resource in the Azure portal), or programmatically, for example with the Azure CLI.

Q: What resources are available for me to learn more about Azure OpenAI Service?

A: To learn more about Azure OpenAI Service, you can visit Microsoft Learn and explore the documentation and tutorials provided by Microsoft Azure.

Q: How can I access Azure OpenAI Service?

A: Once you have been granted access, you can use Azure OpenAI Service through Azure OpenAI Studio and the Azure portal, or programmatically through the REST APIs and SDKs such as the Python SDK.

Q: What is Responsible AI?

A: Responsible AI is a concept that emphasizes the ethical and responsible use of AI technologies, such as ensuring fairness, transparency, and accountability in AI systems. Azure OpenAI Service promotes the use of Responsible AI practices.

Q: What is GPT-3?

A: GPT-3, which stands for Generative Pre-trained Transformer 3, is a state-of-the-art language model developed by OpenAI. It is known for its ability to generate human-like text and comprehend natural language.
